Classifying musical genre using Spotify audio features

2020-07-02

Last updated: 2020-07-17

Checks: 7 0

Knit directory: project/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20200723) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 2eb4639. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/spotify.Rmd) and HTML (docs/spotify.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | 2eb4639 | John Blischak | 2020-07-17 | Update Spotify analysis so that it matches how it will appear on the day of the tutorial. |

| html | 80f7ef5 | John Blischak | 2020-07-02 | Build site. |

| Rmd | 6c85c34 | John Blischak | 2020-07-02 | Add spotify analysis |

This analysis attempts to classify songs into their correct musical genre using audio features. It is inspired by the original analysis by Kaylin Pavlik (@kaylinquest) in her 2019 blog post Understanding + classifying genres using Spotify audio features.

spotify <- read.csv("data/spotify.csv", stringsAsFactors = FALSE)

dim(spotify)[1] 32833 15head(spotify) genre danceability energy key loudness mode speechiness acousticness

1 pop 0.748 0.916 6 -2.634 1 0.0583 0.1020

2 pop 0.726 0.815 11 -4.969 1 0.0373 0.0724

3 pop 0.675 0.931 1 -3.432 0 0.0742 0.0794

4 pop 0.718 0.930 7 -3.778 1 0.1020 0.0287

5 pop 0.650 0.833 1 -4.672 1 0.0359 0.0803

6 pop 0.675 0.919 8 -5.385 1 0.1270 0.0799

instrumentalness liveness valence tempo duration_ms artist

1 0.00e+00 0.0653 0.518 122.036 194754 Ed Sheeran

2 4.21e-03 0.3570 0.693 99.972 162600 Maroon 5

3 2.33e-05 0.1100 0.613 124.008 176616 Zara Larsson

4 9.43e-06 0.2040 0.277 121.956 169093 The Chainsmokers

5 0.00e+00 0.0833 0.725 123.976 189052 Lewis Capaldi

6 0.00e+00 0.1430 0.585 124.982 163049 Ed Sheeran

song

1 I Don't Care (with Justin Bieber)

2 Memories

3 All the Time

4 Call You Mine

5 Someone You Loved

6 Beautiful People (feat. Khalid)table(spotify[, 1])

edm latin pop r&b rap rock

6043 5155 5507 5431 5746 4951 spotify <- spotify[, 1:13]Split the data into training and testing sets. The training set should have 3/4 of the samples.

numTrainingSamples <- nrow(spotify) * 3/4

trainingSet <- sample(seq_len(nrow(spotify)), size = numTrainingSamples)

spotifyTraining <- spotify[trainingSet, ]

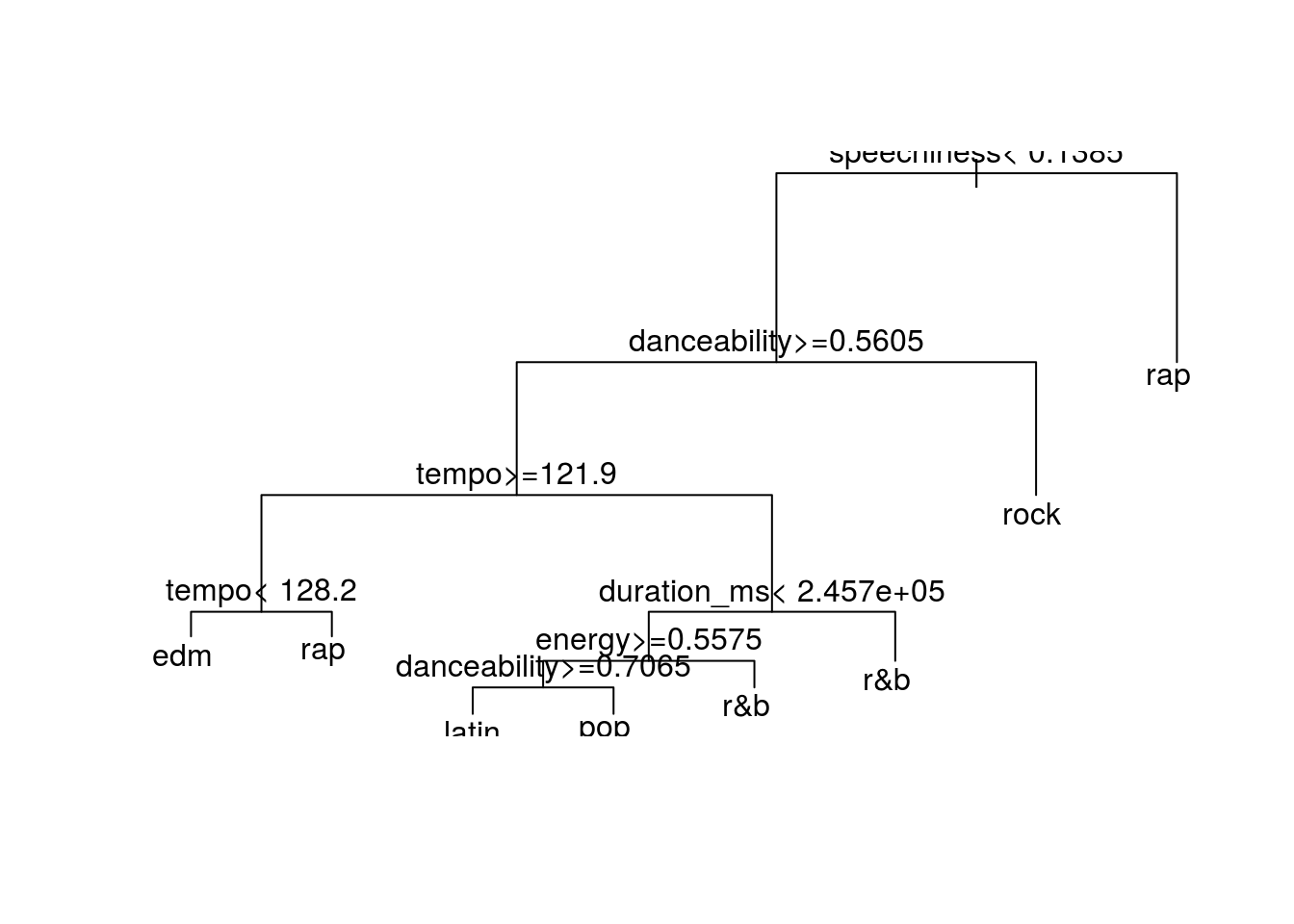

spotifyTesting <- spotify[-trainingSet, ]Build classification model with decision tree from the rpart package.

library(rpart)

model <- rpart(genre ~ ., data = spotifyTraining)

plot(model)

text(model)

| Version | Author | Date |

|---|---|---|

| 80f7ef5 | John Blischak | 2020-07-02 |

Calculate prediction accuracy of the model on the training and testing sets.

predictTraining <- predict(model, type = "class")

(accuracyTraining <- mean(spotifyTraining[, 1] == predictTraining))[1] 0.4036712predictTesting <- predict(model, newdata = spotifyTesting[, -1], type = "class")

(accuracyTesting <- mean(spotifyTesting[, 1] == predictTesting))[1] 0.4000487Evaluate prediction performance using a confusion matrix.

table(predicted = predictTesting, observed = spotifyTesting[, 1]) observed

predicted edm latin pop r&b rap rock

edm 615 129 220 62 33 67

latin 70 292 135 111 74 19

pop 77 120 224 86 36 94

r&b 71 171 165 359 140 157

rap 397 439 341 535 1047 178

rock 254 92 306 230 116 747How does the model compare to random guessing?

predictRandom <- sample(unique(spotifyTesting[, 1]),

size = nrow(spotifyTesting),

replace = TRUE,

prob = table(spotifyTesting[, 1]))

(accuracyRandom <- mean(spotifyTesting[, 1] == predictRandom))[1] 0.1612864

sessionInfo()R version 4.0.0 (2020-04-24)

Platform: x86_64-pc-linux-gnu (64-bit)

Running under: Ubuntu 16.04.6 LTS

Matrix products: default

BLAS: /usr/lib/atlas-base/atlas/libblas.so.3.0

LAPACK: /usr/lib/atlas-base/atlas/liblapack.so.3.0

locale:

[1] LC_CTYPE=C.UTF-8 LC_NUMERIC=C LC_TIME=C.UTF-8

[4] LC_COLLATE=C.UTF-8 LC_MONETARY=C.UTF-8 LC_MESSAGES=C.UTF-8

[7] LC_PAPER=C.UTF-8 LC_NAME=C LC_ADDRESS=C

[10] LC_TELEPHONE=C LC_MEASUREMENT=C.UTF-8 LC_IDENTIFICATION=C

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] rpart_4.1-15 workflowr_1.6.2

loaded via a namespace (and not attached):

[1] Rcpp_1.0.4.6 rprojroot_1.3-2 digest_0.6.25 later_1.1.0.1

[5] R6_2.4.1 backports_1.1.8 git2r_0.27.1 magrittr_1.5

[9] evaluate_0.14 stringi_1.4.6 rlang_0.4.6 fs_1.4.2

[13] promises_1.1.1 whisker_0.4 rmarkdown_2.3 tools_4.0.0

[17] stringr_1.4.0 glue_1.4.1 httpuv_1.5.4 xfun_0.15

[21] yaml_2.2.1 compiler_4.0.0 htmltools_0.5.0 knitr_1.29